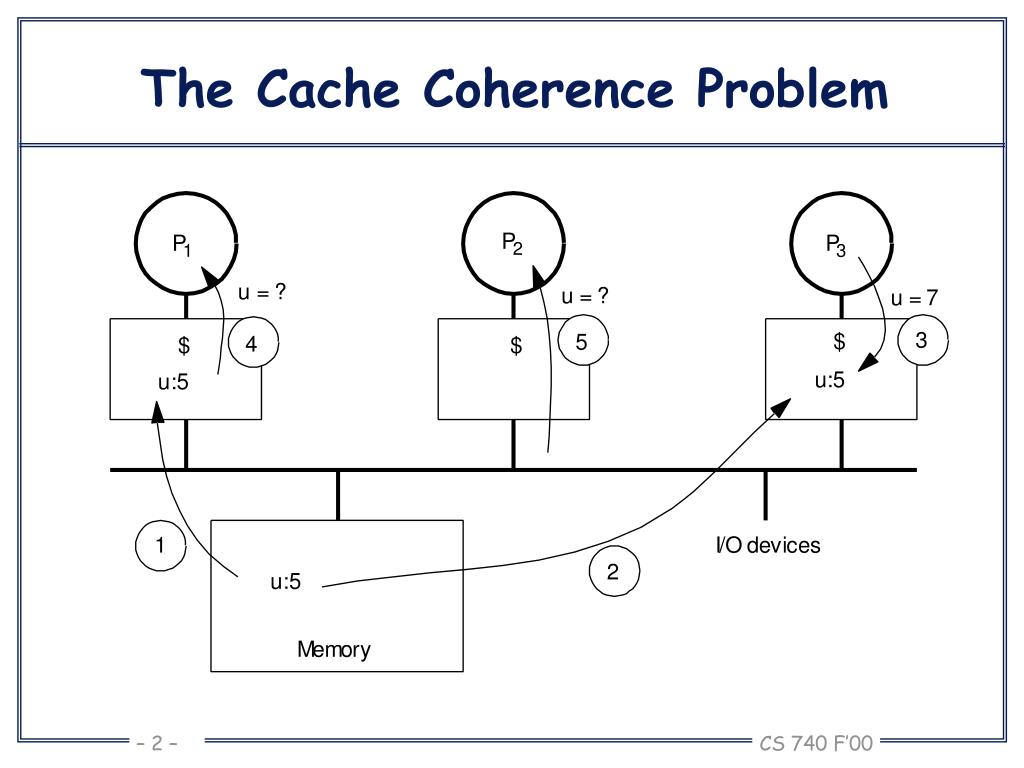

A great deal of memory performance (bandwidth and latency) depends on the snoop protocol. Interestingly, with today's multicore CPU architectures, cache coherency has to be maintained within the CPU package as well as between CPU packages. To keep each local cache up to date, the snoopy bus protocol was invented: it allows caches to listen in on the transport of shared “variables” to any of the CPUs and to update their own copies of these variables if they hold them. A memory system of a multi-CPU system is coherent if, when CPU 1 writes to a memory address X and CPU 2 later reads X with no other writes to X in between, CPU 2's read returns the value written by CPU 1.

Therefore, the caches that supply these variables must also be kept consistent in this respect.
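To illustrate the coherence definition above, here is a minimal sketch of a snooping bus, assuming a simplified MSI-style write-invalidate protocol with a single value per cache line. The post describes caches updating their copies (write-update); many real protocols instead invalidate the other copies, which is what this sketch does. The classes `Bus` and `Cache` and their methods are hypothetical names chosen for illustration, not any real hardware interface.

```python
from enum import Enum

# Hypothetical, simplified MSI-style write-invalidate protocol; not a real hardware API.


class State(Enum):
    MODIFIED = "M"
    SHARED = "S"
    INVALID = "I"


class Bus:
    """Shared bus: every transaction is broadcast to all attached caches."""

    def __init__(self, memory):
        self.memory = memory  # address -> value (main memory)
        self.caches = []

    def attach(self, cache):
        self.caches.append(cache)

    def broadcast_read(self, requester, addr):
        # Other caches snoop the read; a cache holding the line in M writes it back.
        for cache in self.caches:
            if cache is not requester:
                cache.snoop_read(addr)
        return self.memory.get(addr, 0)

    def broadcast_invalidate(self, requester, addr):
        # Every other cache drops its copy before the requester writes.
        for cache in self.caches:
            if cache is not requester:
                cache.snoop_invalidate(addr)


class Cache:
    def __init__(self, name, bus):
        self.name = name
        self.bus = bus
        self.lines = {}  # addr -> (State, value)
        bus.attach(self)

    def read(self, addr):
        state, value = self.lines.get(addr, (State.INVALID, None))
        if state is State.INVALID:
            # Miss: fetch over the bus so other caches can snoop the request.
            value = self.bus.broadcast_read(self, addr)
            self.lines[addr] = (State.SHARED, value)
        return value

    def write(self, addr, value):
        state, _ = self.lines.get(addr, (State.INVALID, None))
        if state is not State.MODIFIED:
            # Gain exclusive ownership by invalidating all other copies.
            self.bus.broadcast_invalidate(self, addr)
        self.lines[addr] = (State.MODIFIED, value)

    def snoop_read(self, addr):
        state, value = self.lines.get(addr, (State.INVALID, None))
        if state is State.MODIFIED:
            self.bus.memory[addr] = value  # write back dirty data
            self.lines[addr] = (State.SHARED, value)

    def snoop_invalidate(self, addr):
        self.lines.pop(addr, None)


memory = {}
bus = Bus(memory)
cpu1 = Cache("CPU1", bus)
cpu2 = Cache("CPU2", bus)

cpu2.read(0x10)         # CPU 2 caches X (initially 0) in S state
cpu1.write(0x10, 42)    # CPU 1 invalidates CPU 2's copy and holds X in M state
print(cpu2.read(0x10))  # CPU 2 misses, CPU 1 writes back on snoop; prints 42
```

The last read is exactly the coherence condition: CPU 2 reads X after CPU 1's write, with no write in between, and gets CPU 1's value.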

The term “Cache Coherent” means that every CPU must see a consistent value for any variable it uses. A great deal of a NUMA system's efficiency depends on the scalability and efficiency of its cache coherence protocol! In older NUMA literature, today's architecture is usually labeled ccNUMA, Cache Coherent NUMA. Placing memory close to the CPUs improves scalability and reduces latency, provided the workload exhibits data locality. Unfortunately, the importance of cache coherency in this architecture is mostly ignored: when people talk about NUMA, most of them talk about the RAM and the core count of the physical CPU.
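To make the locality argument concrete, a simple model treats average memory latency as a weighted mix of local and remote accesses: with f the fraction of accesses served by the local NUMA node, average latency = f × L_local + (1 − f) × L_remote. The sketch below uses a hypothetical helper, `average_latency_ns`, and made-up latency figures. It deliberately leaves out coherence traffic, which is exactly the cost the post argues tends to be overlooked.

```python
def average_latency_ns(local_fraction: float, local_ns: float, remote_ns: float) -> float:
    """Expected latency when `local_fraction` of accesses go to the local NUMA node.

    Hypothetical model: ignores coherence (snoop) traffic entirely.
    """
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    # Weighted average of local and remote access latency.
    return local_fraction * local_ns + (1.0 - local_fraction) * remote_ns


# Hypothetical latencies, chosen only for illustration (not measurements).
LOCAL_NS, REMOTE_NS = 80.0, 130.0
for f in (1.0, 0.9, 0.5):
    print(f"locality {f:.0%}: {average_latency_ns(f, LOCAL_NS, REMOTE_NS):.1f} ns")
```

As locality drops, the average drifts toward the remote latency, which is why placing memory close to the CPU only pays off when the data actually stays local.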
